RSS 2017 Workshop on Integrated Task and Motion Planning

Key Facts

- Workshop Date: Saturday, July 15, 2017 at RSS 2017

- Workshop Location: Stata Center, Rm 123, Massachusetts Institute of Technology, 32 Vassar St., Cambridge, MA, USA.

- Poster Submission Deadline: May 27, 2017

- Poster Acceptance Notification: June 01, 2017

- Organizers:


Schedule

| Time | Session |
| --- | --- |
| 09:15-09:30 | Coffee and Light Refreshments |
| 09:30-09:35 | Workshop Introduction |
| 09:35-10:05 | Daniele Magazzeni: Temporal Reasoning in Task-Motion Planning |
| 10:05-10:35 | Rajeev Alur: Reactive Synthesis for Distributed Systems |
| 10:35-11:00 | Coffee Break |
| 11:00-11:30 | Nicholas Roy: Abstraction, Optimality and Symbols |
| 11:30-12:00 | Tomás Lozano-Pérez: From TAMP to mobile manipulation |
| 12:00-14:00 | Group Lunch and Discussion |
| 14:00-14:30 | Georgios Fainekos: On-line and off-line Temporal Logic Planning under Incomplete or Conflicting Information |
| 14:30-15:00 | Jan Rosell: Manipulation planning for robot co-workers |
| 15:00-15:30 | Coffee Break |
| 15:30-16:00 | Vasu Raman (Industry Perspective) |
| 16:00-16:07 | Poster Lightning Talks |
| 16:07-16:45 | Poster Session |
| 16:45-17:45 | Benchmark and Panel Discussion |
| 17:45 | Informal Dinner |

Description

Complex robot behavior requires not only paths to navigate or reach objects, but also decisions about which objects to reach, in what order, and what style of action to perform. Such decisions combine the need for continuous, collision-free motion planning with the discrete actions of task planning. Efficient algorithms exist to solve these parts in isolation; however, integrating task and motion planning presents algorithmic challenges in generality, scalability, and completeness. Task-Motion Planning (TMP) is an integrated approach to this challenge that has developed within the traditional robotics community. With this workshop, we hope to strengthen its connections to the AI and formal methods communities.

Challenges in TMP arise from the interaction of the task and motion layers: task actions affect motion planning feasibility, and motion plan feasibility dictates the ability to perform task actions. Current work on task and motion planning has achieved good performance by focusing on specific types of actions or solving expected-case scenarios, and ongoing advances are improving completeness, generality, and optimality.

Objectives

The goal of this workshop is to highlight recent applications and explore new methods for combining task and motion planning, looking beyond the traditional robotics community to find connections to work in AI and cyber-physical systems. We hope to identify new abstractions and algorithms for complex robot action. From this workshop, we expect participating researchers to identify and address the important challenges, techniques, and benchmarks necessary for combining task and motion planning.

Audience

This workshop is intended for researchers in robotics, AI planning, and motion planning who are interested in improving the autonomy of robots for complex tasks such as manipulation.

The two main target audiences for the workshop are (1) researchers actively investigating new methods, future trends, and open questions in task and motion planning, and (2) people who are interested in learning about the current state of the art in order to incorporate these methods into their own projects. We strongly encourage the participation of graduate students.

Speakers

Rajeev Alur: Reactive Synthesis for Distributed Systems

Rajeev Alur is the Zisman Family Professor in the Department of Computer and Information Science at the University of Pennsylvania. His research spans three subdisciplines in computer science: theoretical computer science (topics such as automata, logics, concurrency, and models of computation); formal methods in system design (topics such as computer-aided verification, software analysis, and system synthesis); and cyber-physical systems (topics such as embedded controllers, real-time systems, and hybrid systems). He is a member of Penn’s PRECISE Center and PL Club.

Georgios Fainekos: On-line and off-line Temporal Logic Planning under Incomplete or Conflicting Information

Abstract: Temporal logic planning methods have provided a viable path towards solving single- and multi-robot path planning, control, and coordination problems from high-level formal requirements. In existing frameworks, the prevalent assumption is that there is a single stakeholder with full or partial knowledge of the environment in which the robots operate. In addition, the requirements themselves are fixed and do not change over time. However, these assumptions may not hold in either off-line or on-line temporal logic planning problems. That is, multiple stakeholders and inaccurate sources of information may produce a self-contradictory model of the world or the system. Classical temporal logic planning methods cannot handle inconsistent environment models, even though such inconsistencies may not affect the planning problem for a given formal requirement. In this work, we show how such problems can be circumvented by utilizing multi-valued temporal logics and system models.

Bio: Georgios Fainekos is an Associate Professor at the School of Computing, Informatics and Decision Systems Engineering (SCIDSE) at Arizona State University (ASU). He is the director of the Cyber-Physical Systems (CPS) Lab and is currently affiliated with the NSF I/UCR Center for Embedded Systems (CES) at ASU. He received his Ph.D. in Computer and Information Science from the University of Pennsylvania in 2008, where he was affiliated with the GRASP laboratory. He holds a Diploma degree (B.Sc. & M.Sc.) in Mechanical Engineering from the National Technical University of Athens and an M.Sc. degree in Computer and Information Science from the University of Pennsylvania. Before joining ASU, he held a Postdoctoral Researcher position at NEC Laboratories America in the System Analysis & Verification Group. He currently works on Cyber-Physical Systems (CPS) and robotics; in particular, his expertise is in formal methods, logic, artificial intelligence, optimization, and control theory. His research has applications in automotive systems, medical devices, autonomous (ground and aerial) robots, and human-robot interaction (HRI). In 2013, Dr. Fainekos received the NSF CAREER award. He was also a recipient of the SCIDSE Best Researcher Junior Faculty award for 2013 and of the 2008 Frank Anger Memorial ACM SIGBED/SIGSOFT Student Award.

Tomás Lozano-Pérez: From TAMP to mobile manipulation

Abstract: Our goal is to enable robots to carry out complex tasks, such as cleaning a house or making dinner, fully autonomously. This goal requires integrated Task and Motion Planning (TAMP), since the way a task is accomplished depends intimately on the robot kinematics and on the shape and placement of objects in the environment. There has been recent progress in addressing the TAMP problem, primarily in settings where the actual state is observable. However, on a real robot, uncertainty is pervasive. Just as kinematics and geometry can have a large impact on how a task is carried out, uncertainty arguably has an even greater impact. In particular, the robot must reason about how to acquire and interpret sensory information. We have been pursuing an approach to TAMP under uncertainty built on belief-space planning. In this talk I will review recent progress in this direction.

This is joint work with Leslie Pack Kaelbling.

Bio: Tomás Lozano-Pérez is currently the School of Engineering Professor in Teaching Excellence at the Massachusetts Institute of Technology (MIT), USA, where he is a member of the Computer Science and Artificial Intelligence Laboratory. He has been Associate Director of the Artificial Intelligence Laboratory and Associate Head for Computer Science of MIT’s Department of Electrical Engineering and Computer Science. He was a recipient of the 2011 IEEE Robotics Pioneer Award and a 1985 Presidential Young Investigator Award. He is a Fellow of the Association for the Advancement of Artificial Intelligence (AAAI) and a Fellow of the IEEE.

His research has been in robotics (configuration-space approach to motion planning), computer vision (interpretation-tree approach to object recognition), machine learning (multiple-instance learning), medical imaging (computer-assisted surgery), and computational chemistry (drug activity prediction and protein structure determination from NMR & X-ray data). His current research is aimed at integrating task, motion, and decision-theoretic planning for robotic manipulation.

Daniele Magazzeni: Temporal Reasoning in Task-Motion Planning

Abstract: Consider a scenario where a team of robots has to coordinate their movements and task allocations in order to complete a mission as soon as possible. Different tasks have different durations, and each task must be performed within one of the available time windows. Such a challenging scenario requires temporal reasoning in addition to task-motion planning. Recently, much progress has been made in AI Temporal Planning, which provides an elegant and efficient approach to optimisation in temporal domains. In this talk I will present the integration of temporal planning into the task-motion planning framework, and discuss why a synergy between these areas is very promising.

Bio: Daniele Magazzeni is Lecturer in Artificial Intelligence at King’s College London. His research explores the links between Artificial Intelligence and Verification, with a particular focus on planning for robotics and complex systems. Magazzeni is an elected member of the ICAPS Executive Council. He serves on the organising and program committees of major AI conferences. He is Editor-in-Chief of AI Communications. He was Conference Chair of ICAPS 2016, is Workshop Chair of IJCAI 2017, and will be chair of the special track on Robotics at ICAPS-18. He is a co-investigator in UK and EU projects. Daniele is a scientific advisor and has collaborations and consultancy projects with a number of companies and organisations.

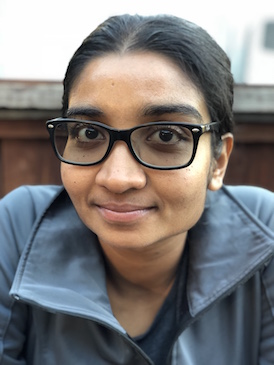

Vasu Raman

Vasu Raman is a roboticist at Zoox, Inc. Previously, she was a Senior Scientist at the United Technologies Research Center in Berkeley, CA, and a Computing and Mathematical Sciences postdoctoral scholar at the California Institute of Technology, where she worked with Richard Murray and Sanjit Seshia.

Her research explores algorithmic methods for designing and controlling autonomous systems, guaranteeing correctness with respect to formal specifications. She focuses on safety-critical systems performing complex tasks in adversarial environments, interacting with a variety of agents. She draws on technical and creative perspectives from formal methods for software verification, hybrid systems, robotics, control and game theory.

She earned a Ph.D. in 2013 from the Department of Computer Science at Cornell University, where she was advised by Hadas Kress-Gazit and affiliated with the Autonomous Systems Lab and the LTLMoP Project. Her dissertation addressed challenges in the synthesis of provably correct control for robotics. She also holds a B.A. in Computer Science and Mathematics from Wellesley College.

Jan Rosell: Manipulation planning for robot co-workers

Abstract: Service and industrial robotics can broaden their scope with the advent of dexterous and mobile collaborative robots, which may act as robot helpers at home or as robot co-workers in the workplace. Moving and executing manipulation tasks autonomously in human environments imposes several planning requirements, both at the motion level (such as moving in a human-like manner, or allowing interactions with the objects in the environment) and at the task level (such as guaranteeing the feasibility of plans with respect to possible object placements and grasps, achievable and collision-free inverse kinematic solutions, and alternative paths, as well as guaranteeing the adaptability or robustness of the plans to changing and uncertain environments). In this talk I will present different perspectives on integrating both planning levels to fit different manipulation task requirements.

Bio: Jan Rosell received the BS degree in Telecommunication Engineering and the PhD degree in Advanced Automation and Robotics from the Universitat Politècnica de Catalunya (UPC), Barcelona, Spain, in 1989 and 1998, respectively. He joined the Institute of Industrial and Control Engineering (IOC) in 1992, where he has carried out research in robotics. He has taught Automatic Control and Robotics as an Assistant Professor from 1996 and as an Associate Professor since 2001. His current technical areas include task and motion planning, mobile manipulation, and robot co-workers.

Nicholas Roy: Abstraction, Optimality and Symbols

Abstract: There is a tension in planning between approaches that use abstraction to accelerate planning, but give up on optimality (and sometimes completeness), and approaches that guarantee optimality and completeness, but give up on scaling up to interesting problems. I will talk about recent work in my group in developing a planning approach using a specific form of abstraction that both ensures completeness (and optimality, if that’s your thing), and also accelerates planning in the way that abstraction is intended to do. I will also do my best to connect this work to the general idea of symbolic representations in robotics.

Bio: Nicholas Roy is the Bisplinghoff Professor in the Department of Aeronautics & Astronautics at the Massachusetts Institute of Technology and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT. He received his Ph.D. in Robotics from Carnegie Mellon University in 2003. His research interests include unmanned aerial vehicles, autonomous systems, human-computer interaction, decision-making under uncertainty, and machine learning.

Posters

- Nicola Castaman, Elisa Tosello, and Enrico Pagello. Conditional Task and Motion Planning through an Effort-based Approach.

- Jorge A. Delgado, Edgar Granados, Eduardo D. Martínez, Karen L. Poblete, and Marco Morales. Integrated Task and Motion Planning for Instances of Autonomous Driving.

- Caelan Reed Garrett, Tomás Lozano-Pérez, and Leslie Pack Kaelbling. STRIPS Planning in Infinite Domains.

- David Paulius, Ahmad Babaeian Jelodar, and Yu Sun.

- Chris Paxton, Vasumathi Raman, Gregory D. Hager, and Marin Kobilarov. Combining Neural Networks and Tree Search for Task and Motion Planning in Challenging Environments.

- Camille Phiquepal and Marc Toussaint. Combined Task and Motion Planning under Partial Observability: An Optimization-Based Approach.

- William Vega-Brown and Nicholas Roy. Anchoring abstractions for near-optimal task and motion planning.